I am a senior researcher at the Electronics and Telecommunications Research Institute (ETRI) and, concurrently, an associate professor at the University of Science and Technology (UST), South Korea.

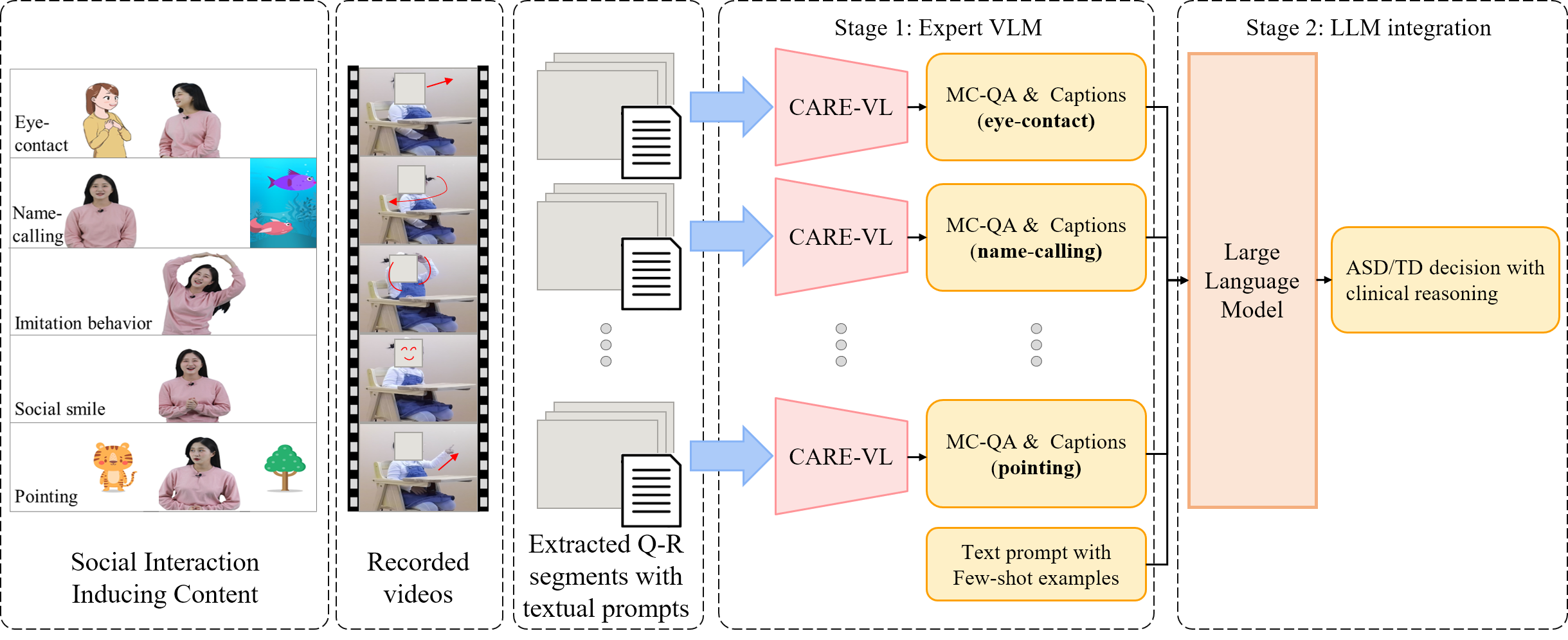

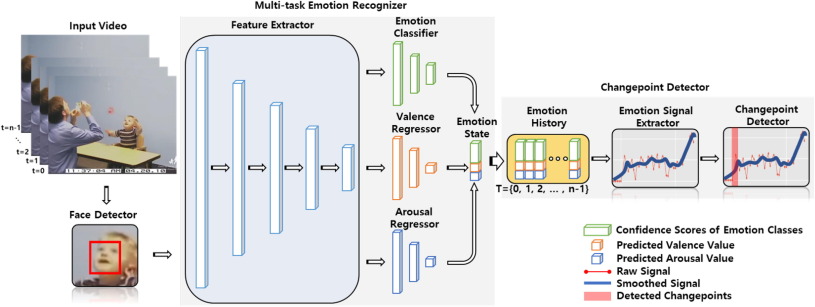

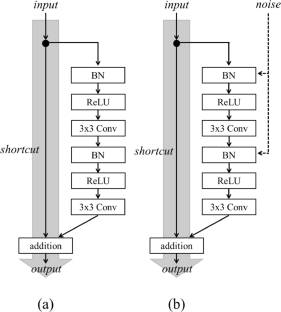

My current research focuses on Vision-Language Models (VLM) and Vision-Language-Action

(VLA) models,

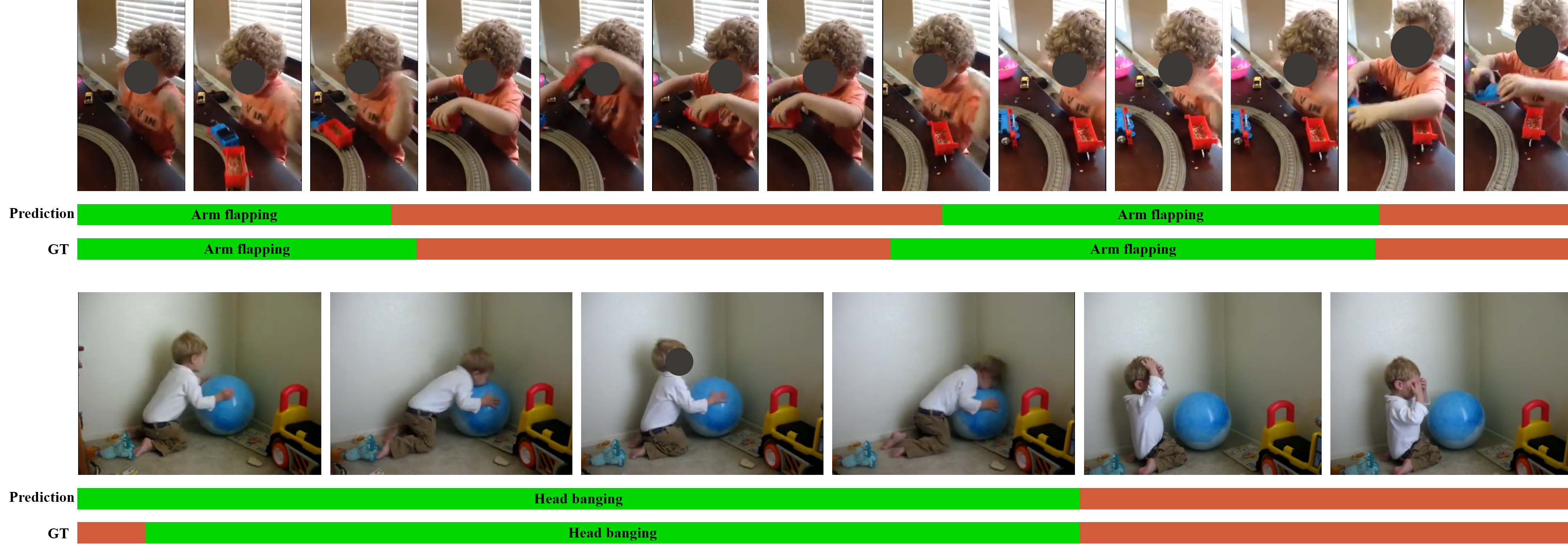

with broader interests in computer vision, deep learning, and their applications in robotics and healthcare.

We are developing Vision-Language-Action models that enable robots to understand natural language instructions and autonomously plan and execute manipulation tasks. By bridging vision, language, and action spaces, our goal is to build general-purpose robot intelligence that can adapt to diverse real-world environments.

Extending VLA models to whole-body humanoid control, we are exploring methods for coordinating full-body locomotion and manipulation through unified vision-language-action frameworks. This research aims to enable humanoid robots to perform complex, multi-step tasks in unstructured environments.

For a complete list of publications, visit my Google Scholar profile.